Connector sync rules

Use connector sync rules to help control which documents are synced between the third-party data source and Elasticsearch.

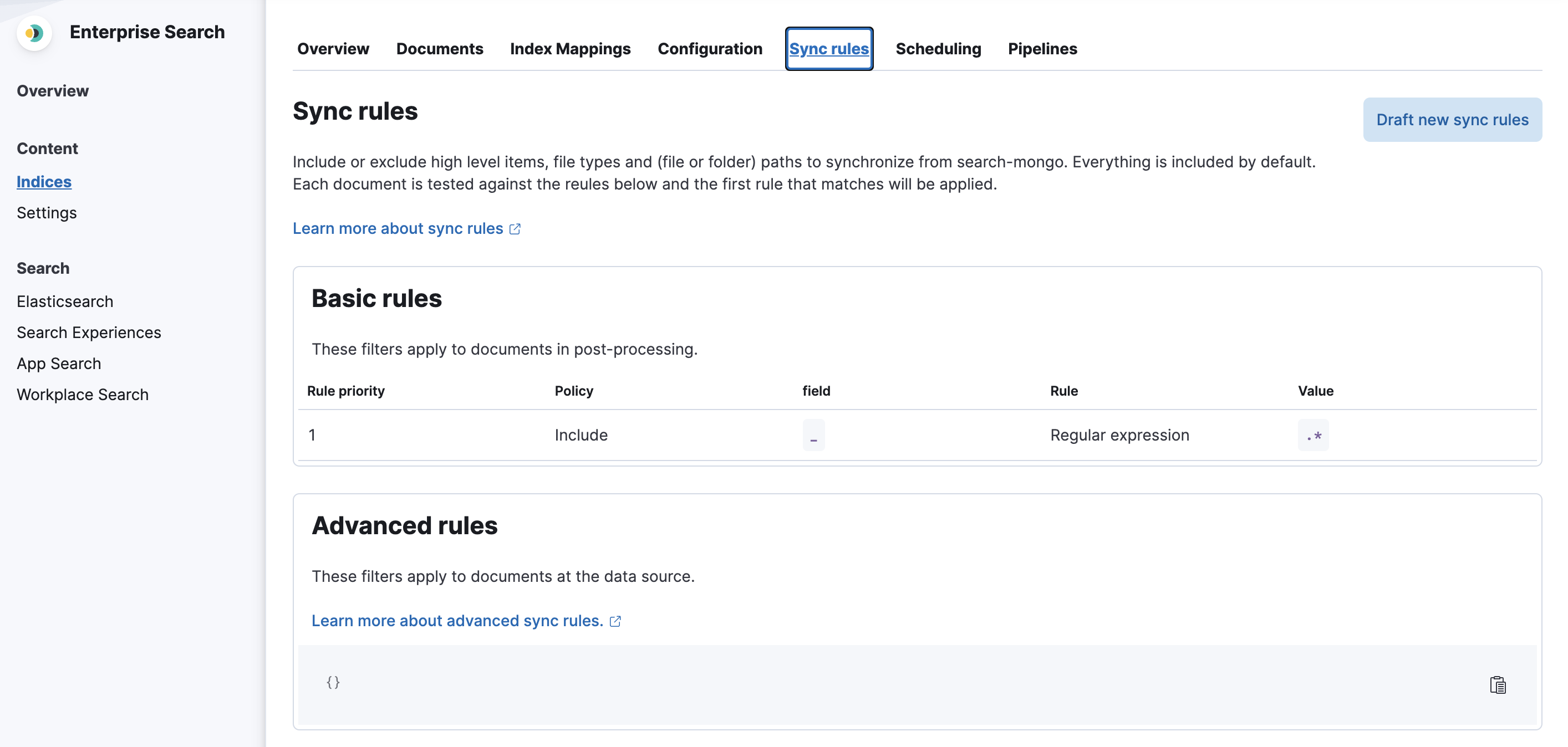

Define sync rules in the Kibana UI for each connector index, under the Sync rules tab for the index.

Sync rules apply to native connectors and connector clients. Sync rules do not apply to Workplace Search connectors, although similar features are available in Workplace Search. See the Workplace Search documentation.

There are two types of sync rules:

- Basic rules - these rules are represented in a table-like view.

- Advanced rules - these rules cover complex query-and-filter scenarios that cannot be expressed with basic rules. Advanced rules are defined through a source-specific DSL JSON snippet.

General filtering

Before discussing sync rules, it helps to establish some general concepts of data filtering.

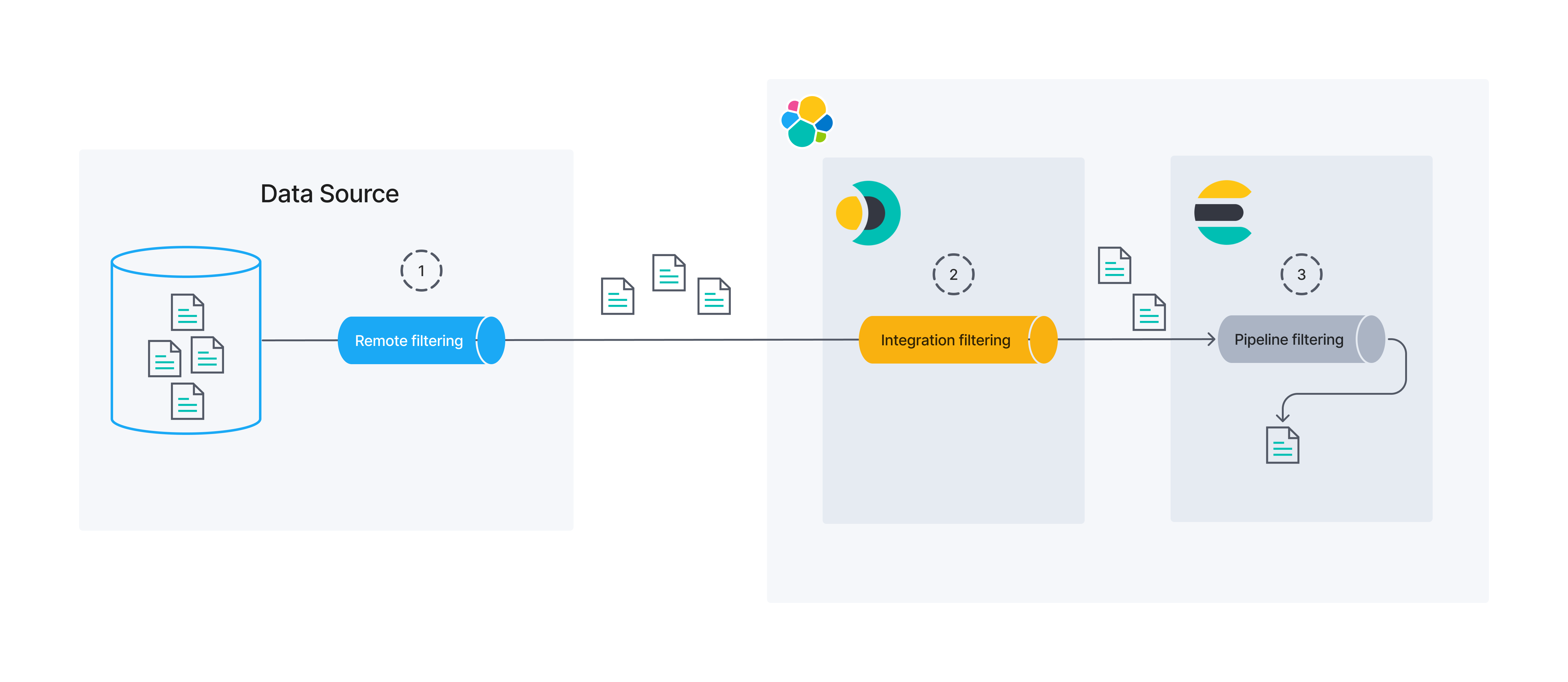

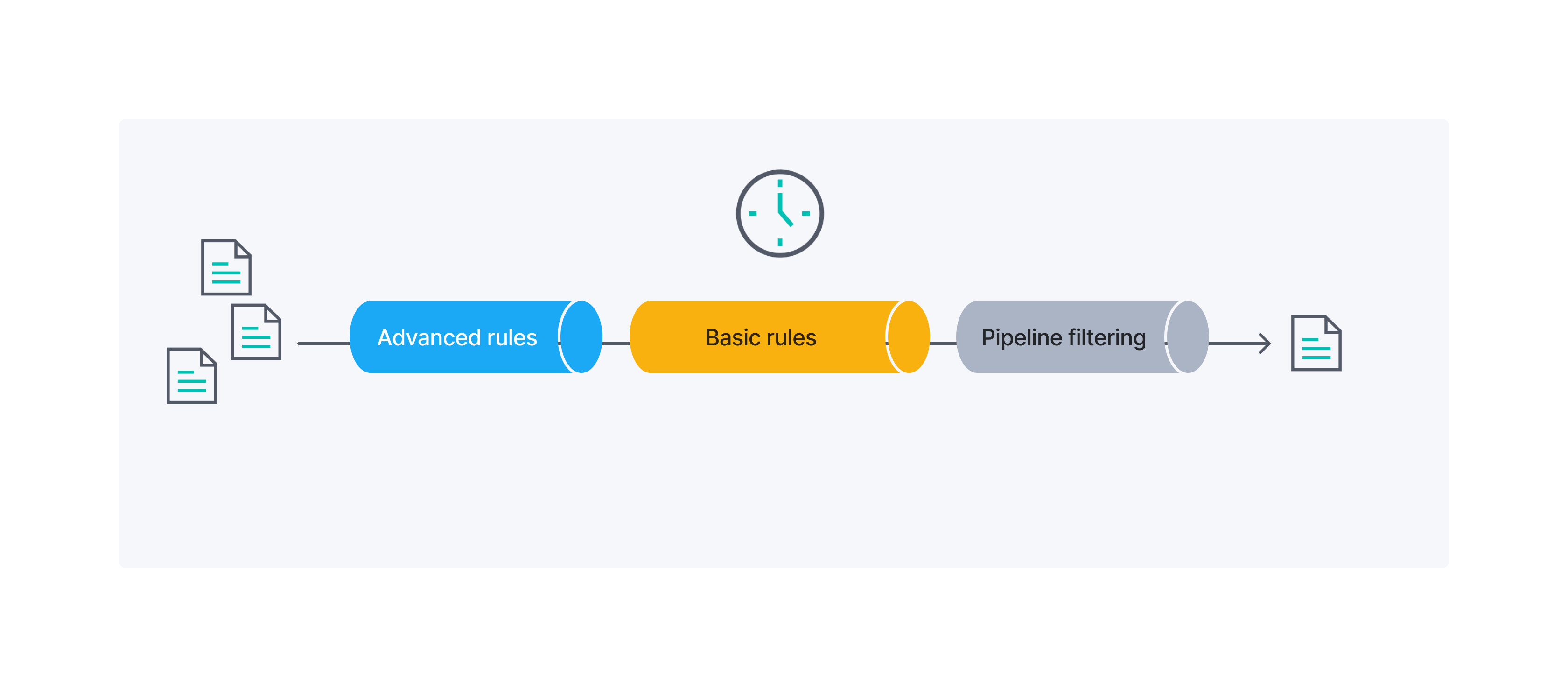

This diagram illustrates that data filtering can occur in several different processes and locations. First, data might be filtered at its source. We call this "remote filtering", because the process limiting the data runs outside Elastic. Next comes "integration filtering": data filtering that occurs in the process that acts as a bridge between the data’s source and Elasticsearch (its final destination). Filtering that takes place in the Enterprise Search connectors is an example of integration filtering. Finally, Elasticsearch itself can filter data right before persistence through its ingest pipelines.

This guide will not focus much on ingest pipeline filtering. However, sync rules can be used to influence both remote and integration filtering.

At this time, basic sync rules are the only way to control integration filtering for connectors. However, remote filtering covers a far broader topic than connectors alone could influence. For best results, work closely with the owners and maintainers of your data source to ensure that your source data is well organized and the source is optimized for the types of queries the connectors will issue to it.

Sync rules overview

Often, your data lake holds far more data than you want to expose to the end user. For example, you may want to search a product catalog, but not include vendor contact information, even if the two are co-located for business purposes.

The optimal time to filter data is early in the data pipeline, for two reasons:

- Performance: It’s more efficient to send a query to the backing data source than to obtain all the data and then filter it in the connector. It’s faster to send a smaller dataset over a network and to process it on the connector side.

- Security: Query-time filtering is applied on the data source side, so the excluded data is never sent over the network and into the connector, which limits the exposure of your data.

In a perfect world, all filtering would be done as remote filtering.

In practice, however, this is not always possible. Some sources do not allow robust remote filtering. Others do, but require special setup (building indexes on specific fields, tweaking settings) that may require attention from other members of your business.

With this in mind, sync rules were designed to influence both remote filtering and integration filtering. Your goal should be to do as much remote filtering as possible, but integration filtering is a perfectly viable fallback. By definition, remote filtering is applied before the data is obtained from a third-party source. Integration filtering is applied after the data is obtained from a third-party source, but before it is ingested into the Elasticsearch index.

All sync rules are applied to a given document before any ingest pipelines run on that same document. Therefore, you can use your ingest pipelines for any processing that must occur after integration filtering.

Basic rules

Each basic rule has one of two "policies": include or exclude.

Include rules are used to include the documents that "match" the specified condition.

Exclude rules are used to exclude the documents that "match" the specified condition.

A "match" is determined based on a condition defined by a combination of "field", "rule", and "value".

The Field column should be used to define which field on a given document should be considered.

The following rules are available in the Rule column:

- equals - The field value is equal to the specified value.

- starts_with - The field value starts with the specified (string) value.

- ends_with - The field value ends with the specified (string) value.

- contains - The field value includes the specified (string) value.

- regex - The field value matches the specified regular expression.

- > - The field value is greater than the specified value.

- < - The field value is less than the specified value.

Finally, the Value column is dependent on:

- the data type in the specified "field"

- which "rule" was selected.

For example, a value of [A-Z]{2} might make sense for a regex rule, but much less so for a > rule.

Similarly, you probably wouldn’t have a value of espresso when operating on an ip_address field, but perhaps you would for a beverage field.
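The field, rule, and value combination described above can be sketched in Python. This is only an illustration, not the connector's actual implementation: the `RULES` table and the `matches` function are hypothetical names, values are assumed to be already converted to the field's data type, and treating a regex rule as a search anywhere in the value is an assumption.

```python
import re
from typing import Any, Callable, Dict

# Hypothetical mapping from the entries of the Rule column to predicates.
# Each predicate receives (field_value, rule_value).
RULES: Dict[str, Callable[[Any, Any], bool]] = {
    "equals": lambda field, value: field == value,
    "starts_with": lambda field, value: str(field).startswith(str(value)),
    "ends_with": lambda field, value: str(field).endswith(str(value)),
    "contains": lambda field, value: str(value) in str(field),
    # Assumption: the pattern may match anywhere in the field value.
    "regex": lambda field, value: re.search(str(value), str(field)) is not None,
    # Comparisons raise TypeError if the two values are not comparable,
    # which mirrors the point that the value depends on the field's type.
    ">": lambda field, value: field > value,
    "<": lambda field, value: field < value,
}


def matches(document: Dict[str, Any], field: str, rule: str, value: Any) -> bool:
    """Return True if the document's field satisfies the rule for the value."""
    if field not in document:
        # Assumption: a document without the field never matches.
        return False
    return RULES[rule](document[field], value)
```

For instance, `matches({"state": "MA"}, "state", "equals", "MA")` is a case-sensitive comparison, so the same call with the document value `"ma"` does not match.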

Basic rules examples

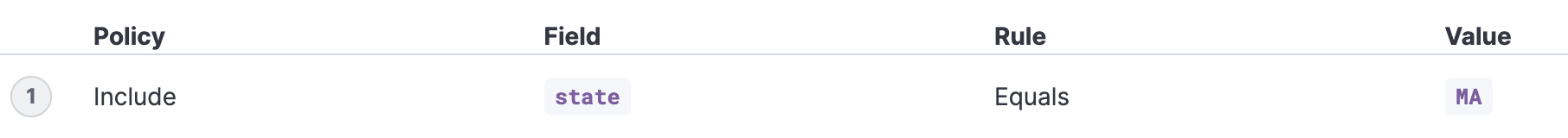

Example 1

Include only documents that have a state field with the value MA. This is a case-sensitive match.

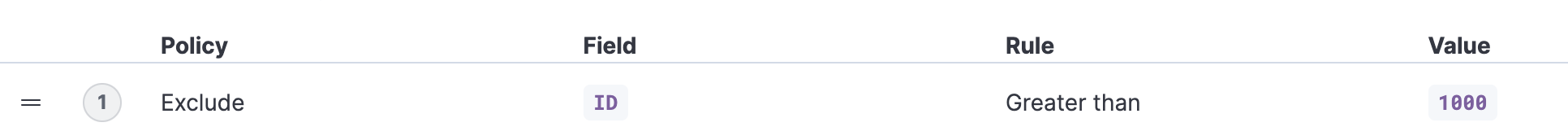

Example 2

Exclude all documents that have an ID field with the value greater than 1000.

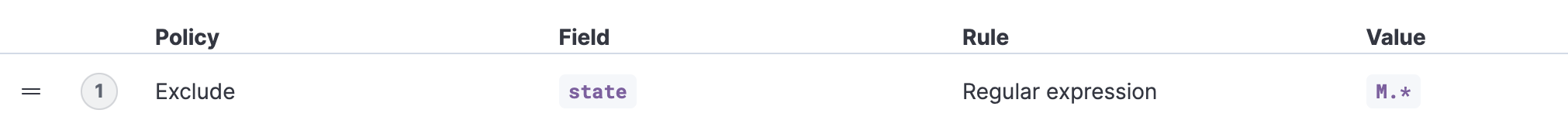

Example 3

Exclude all documents that have a state field that matches a specified regex.

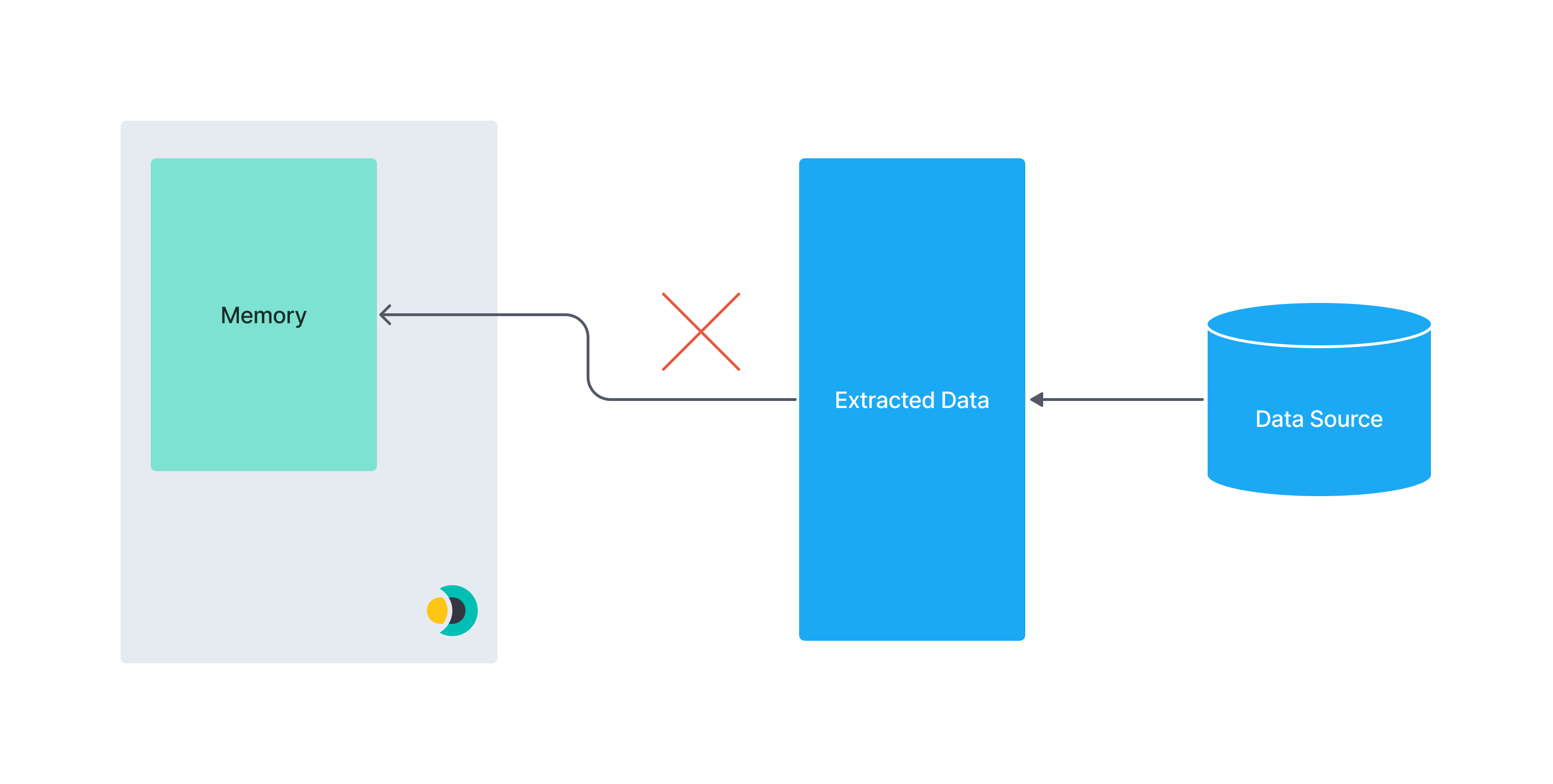

Performance implications

- If you rely solely on basic rules in the integration filtering phase, the connector fetches all the data from the data source.

- For data sources without automatic pagination or similar optimisations, fetching all the data can lead to memory issues, for example when a dataset is too big to fit in memory at once.

The native MongoDB connector provided by Elastic uses pagination and therefore has optimised performance. Keep in mind that custom, community-built connector clients may not have these performance optimisations.

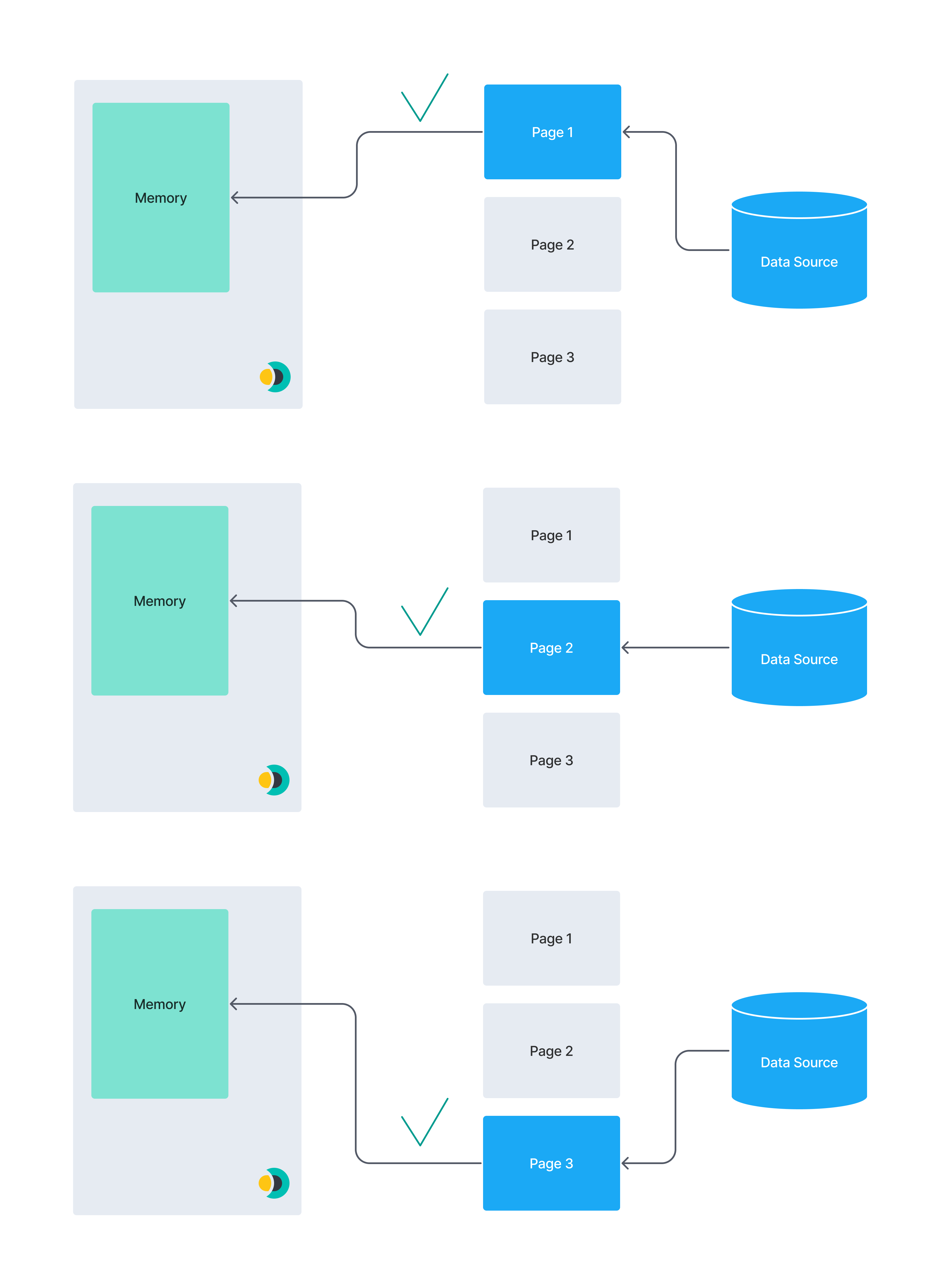

The following diagrams show the concept of pagination. A huge data set may not fit into the memory of a connector instance. If you break the data set into smaller chunks, the chunks fit into memory one after another.
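As a rough illustration of the pagination idea, the following Python sketch reads a data set in fixed-size pages so that only one page is held in memory at a time. The `paginate` helper is a hypothetical name and is not part of any connector API.

```python
from itertools import islice
from typing import Iterable, Iterator, List, TypeVar

T = TypeVar("T")


def paginate(items: Iterable[T], page_size: int) -> Iterator[List[T]]:
    """Yield consecutive pages of at most page_size items."""
    if page_size < 1:
        raise ValueError("page_size must be at least 1")
    iterator = iter(items)
    while True:
        # Only the current page is materialised in memory.
        page = list(islice(iterator, page_size))
        if not page:
            return
        yield page
```

A caller would process each page and discard it before requesting the next one, for example `for page in paginate(cursor, 100): ...`, where `cursor` stands for any iterable over the source data.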

Basic rules in the remote filtering phase

Because remote filtering happens at data source query time, it is highly specific to the data source.

If the connector cannot determine how to combine one or more basic rules into a single query to the data source, the unused basic rules will not be used in remote filtering, but will instead be applied in integration filtering. If you observe this happening and want to tune performance, consider using advanced rules to fine-tune your remote filtering.

Advanced rules

Advanced rules override any remote filtering query that could have been inferred from the basic rules. If an advanced rule is defined, any defined basic rules will be used exclusively for integration filtering.

Advanced rules are only used in remote filtering. You can think of advanced rules as a language-agnostic way to represent queries to the data source. Therefore, these rules are highly source-specific.

Each connector supporting advanced rules provides its own DSL to specify rules. Refer to the documentation for each connector for details.

Interplay between basic rules and advanced rules

You can also use basic rules and advanced rules together for filtering a data set.

The following diagram provides an overview of the order in which advanced rules, basic rules, and pipeline filtering are applied to your documents:
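The order of the three stages can also be sketched in Python. This is a simplified model under stated assumptions: `run_sync` and its parameters are hypothetical names, remote filtering is simulated with an in-process predicate even though it really runs as a query at the data source, and the ingest pipeline is reduced to a single function.

```python
from typing import Any, Callable, Dict, Iterable, List

Document = Dict[str, Any]


def run_sync(
    source_documents: Iterable[Document],
    remote_filter: Callable[[Document], bool],
    integration_filter: Callable[[Document], bool],
    ingest_pipeline: Callable[[Document], Document],
) -> List[Document]:
    """Apply the three filtering stages in order and return what gets indexed."""
    # Stage 1: remote filtering (advanced rules). Simulated here; in practice
    # the data source only returns documents matching the query.
    fetched = [doc for doc in source_documents if remote_filter(doc)]
    # Stage 2: integration filtering (basic rules), applied inside the connector.
    kept = [doc for doc in fetched if integration_filter(doc)]
    # Stage 3: ingest pipeline processing, run on a copy of each kept document.
    return [ingest_pipeline(dict(doc)) for doc in kept]
```

Documents dropped in an earlier stage never reach a later one, which is why filtering as early as possible reduces the work done downstream.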

Example

In the following example we want to filter a data set of apartments so that it contains only apartments with specific properties. We’ll use basic and advanced rules throughout the example.

A sample apartment looks like this in JSON format:

{
  "id": 1234,
  "bedrooms": 3,
  "price": 1500,
  "address": {
    "street": "Street 123",
    "government_area": "Area",
    "country_information": {
      "country_code": "PT",
      "country": "Portugal"
    }
  }
}

The target data set should fulfill the following conditions:

- Every apartment should have at least three bedrooms

- The apartments should not be more expensive than 1000/month

- The apartment with id 1234 should be included regardless of the first two conditions

- Each apartment should be located in either Portugal or Spain

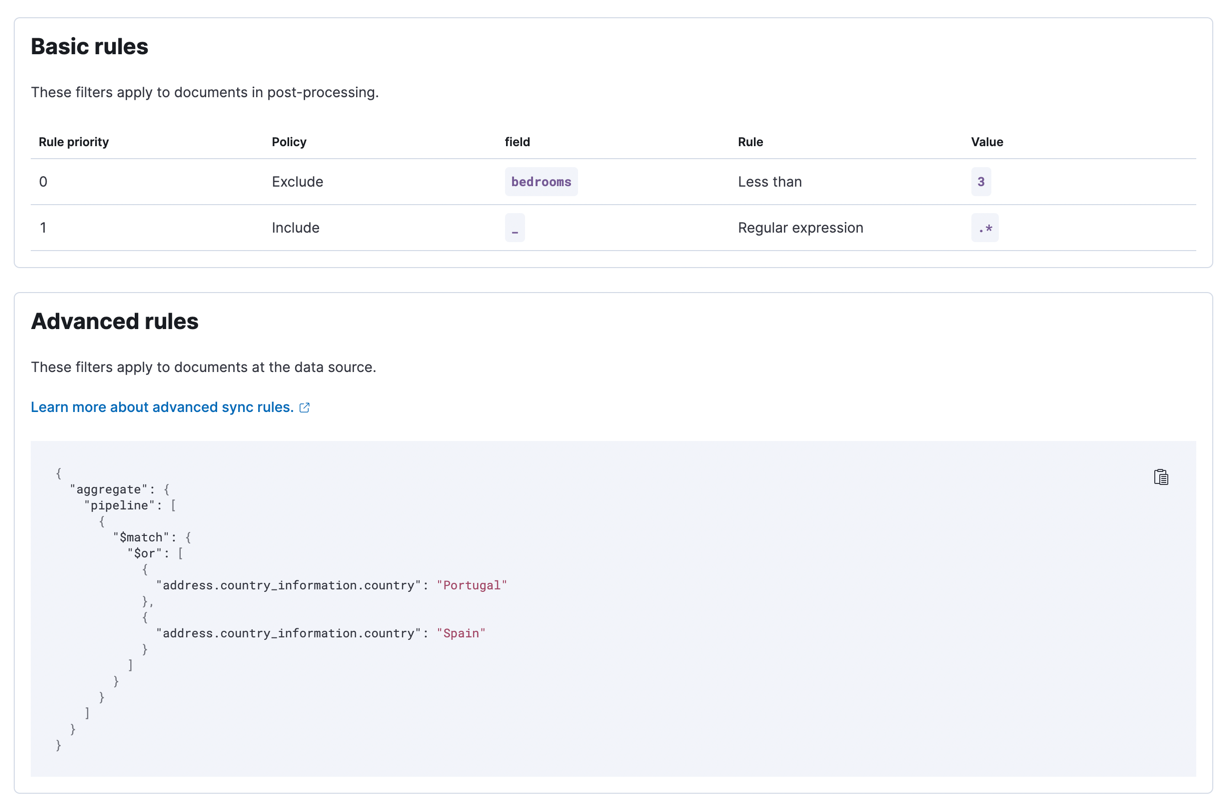

Basic rules

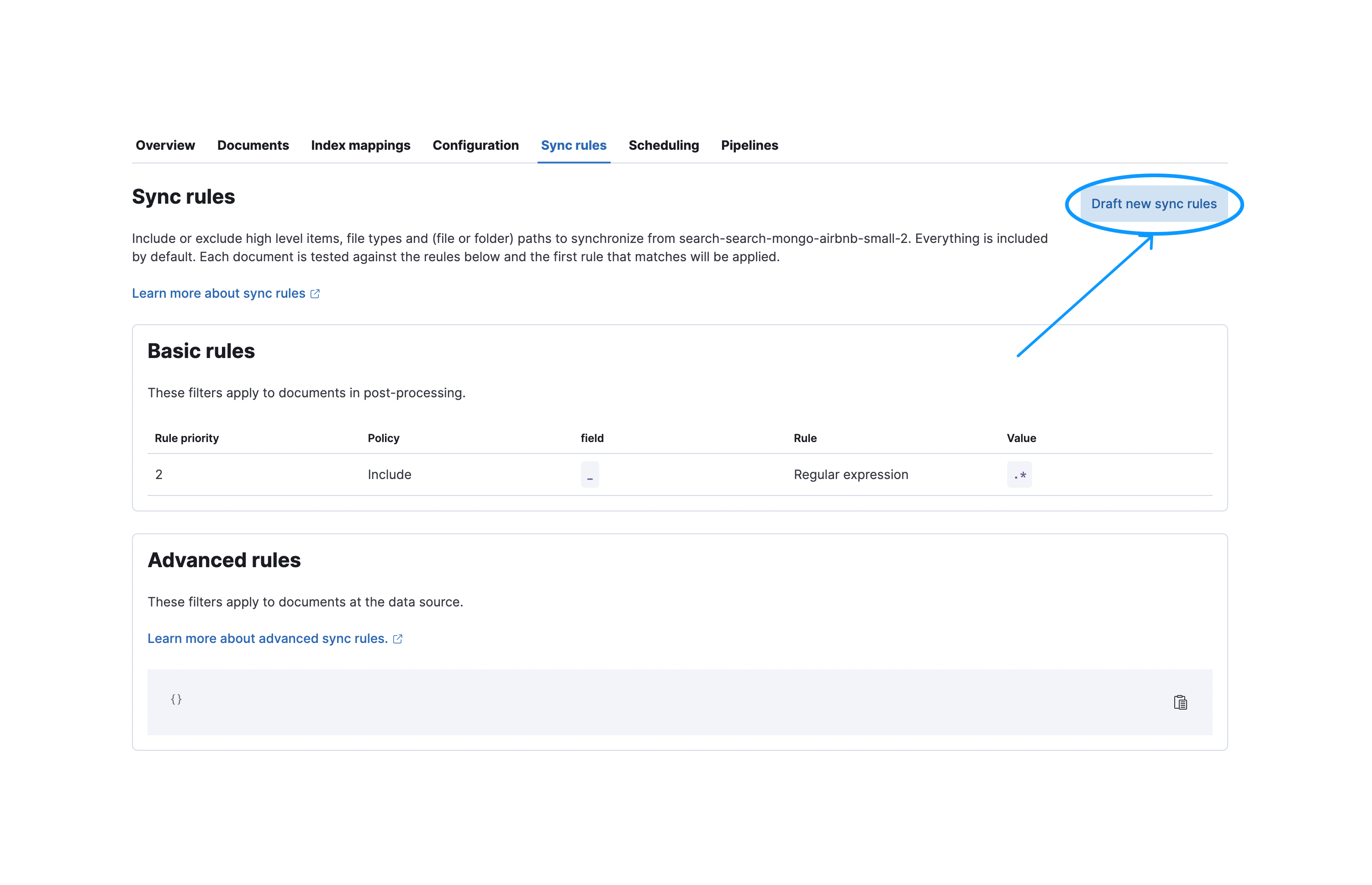

To create a new basic rule, navigate to the Sync Rules tab and select Draft new sync rules:

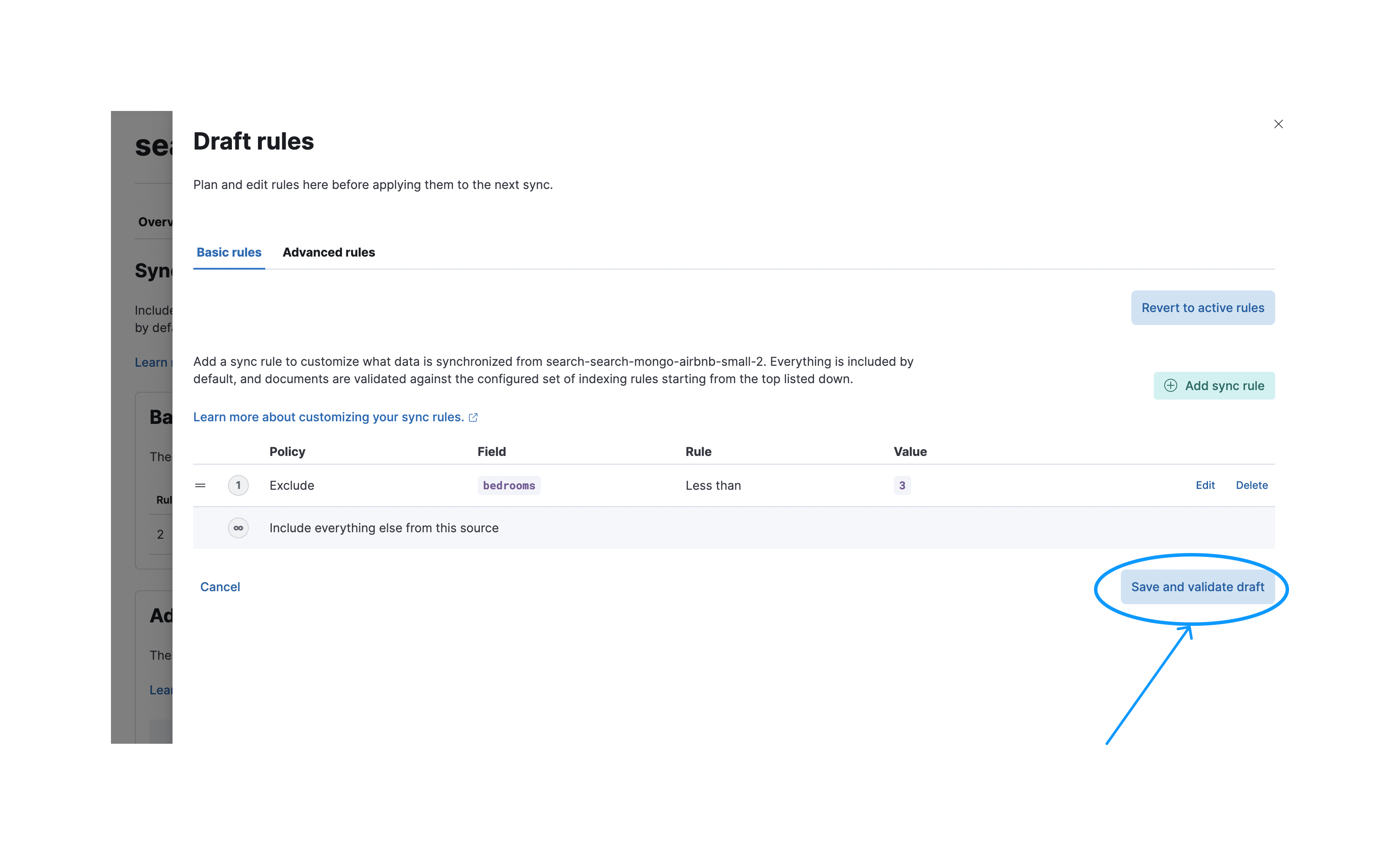

Afterwards, press the Save and validate draft button to validate these rules. Note that saved rules are in a draft state. They won’t be executed in the next sync unless they are applied.

After a successful validation you can apply your rules so they’ll be executed in the next sync.

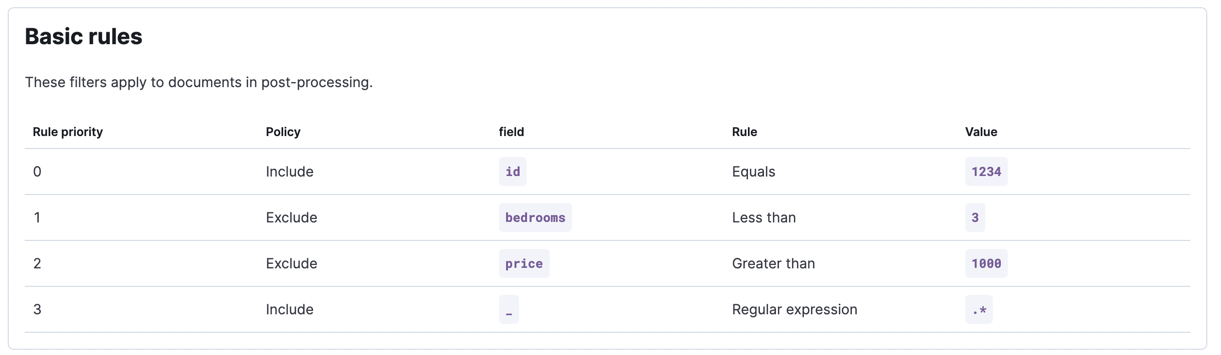

The following conditions can be covered by basic rules:

- The apartment with id 1234 should be included regardless of the first two conditions

- Every apartment should have at least three bedrooms

- The apartments should not be more expensive than 1000/month

Remember that order matters for basic rules. You may get different results for a different ordering.
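To see why ordering can change the outcome, consider the following Python sketch. It assumes that rules are evaluated top to bottom, that the first matching rule decides whether a document is kept, and that a document matching no rule is included. These evaluation semantics, as well as the `keep` function and the rule tuples, are assumptions made for illustration.

```python
from typing import Any, Callable, Dict, List, Tuple

Document = Dict[str, Any]
# A rule is a (policy, condition) pair; policy is "include" or "exclude".
Rule = Tuple[str, Callable[[Document], bool]]


def keep(document: Document, rules: List[Rule]) -> bool:
    """Assumed semantics: the first matching rule decides; default is include."""
    for policy, condition in rules:
        if condition(document):
            return policy == "include"
    return True


rules: List[Rule] = [
    ("include", lambda d: d["id"] == 1234),    # always keep apartment 1234
    ("exclude", lambda d: d["bedrooms"] < 3),  # at least three bedrooms
    ("exclude", lambda d: d["price"] > 1000),  # at most 1000/month
]

# Same rules, but the include rule is moved to the end.
reordered: List[Rule] = rules[1:] + rules[:1]

sample = {"id": 1234, "bedrooms": 3, "price": 1500}
kept_with_original_order = keep(sample, rules)
kept_with_reordered_rules = keep(sample, reordered)
```

With the include rule first, the sample apartment is kept despite its price. With the include rule last, the price rule matches first and the apartment is excluded.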

The remaining condition, "Each apartment should be located in either Portugal or Spain", is handled with advanced rules.

Advanced rules

The last rule can be implemented with advanced rules.

You want to include only apartments that are located in "Portugal" or "Spain". We need to use advanced rules here because we’re dealing with deeply nested objects.

Let’s assume that the apartment data is stored inside a MongoDB instance. For MongoDB we support aggregation pipelines in our advanced rules, among other things. An aggregation pipeline that selects only the properties located in Portugal or Spain would look like this:

[
  {
    "$match": {
      "$or": [
        {
          "address.country_information.country": "Portugal"
        },
        {
          "address.country_information.country": "Spain"
        }
      ]
    }
  }
]
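To make the effect of this pipeline concrete, here is a plain-Python approximation of what the `$match` stage selects. It does not use MongoDB and is not how the connector evaluates the rule: `get_path` and `located_in_allowed_country` are hypothetical helpers that resolve the dotted field path on a nested dictionary and compare the result with the two allowed countries.

```python
from typing import Any, Dict, Optional

ALLOWED_COUNTRIES = {"Portugal", "Spain"}


def get_path(document: Dict[str, Any], dotted_path: str) -> Optional[Any]:
    """Resolve a dotted path such as 'address.country_information.country'."""
    current: Any = document
    for key in dotted_path.split("."):
        if not isinstance(current, dict) or key not in current:
            return None  # the path does not exist in this document
        current = current[key]
    return current


def located_in_allowed_country(apartment: Dict[str, Any]) -> bool:
    """Approximate the $or of the two country equality conditions."""
    country = get_path(apartment, "address.country_information.country")
    return country in ALLOWED_COUNTRIES
```

Applied to the sample apartment shown earlier, the check passes because its nested country field is "Portugal". An apartment without an address, or with a different country, would be filtered out.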

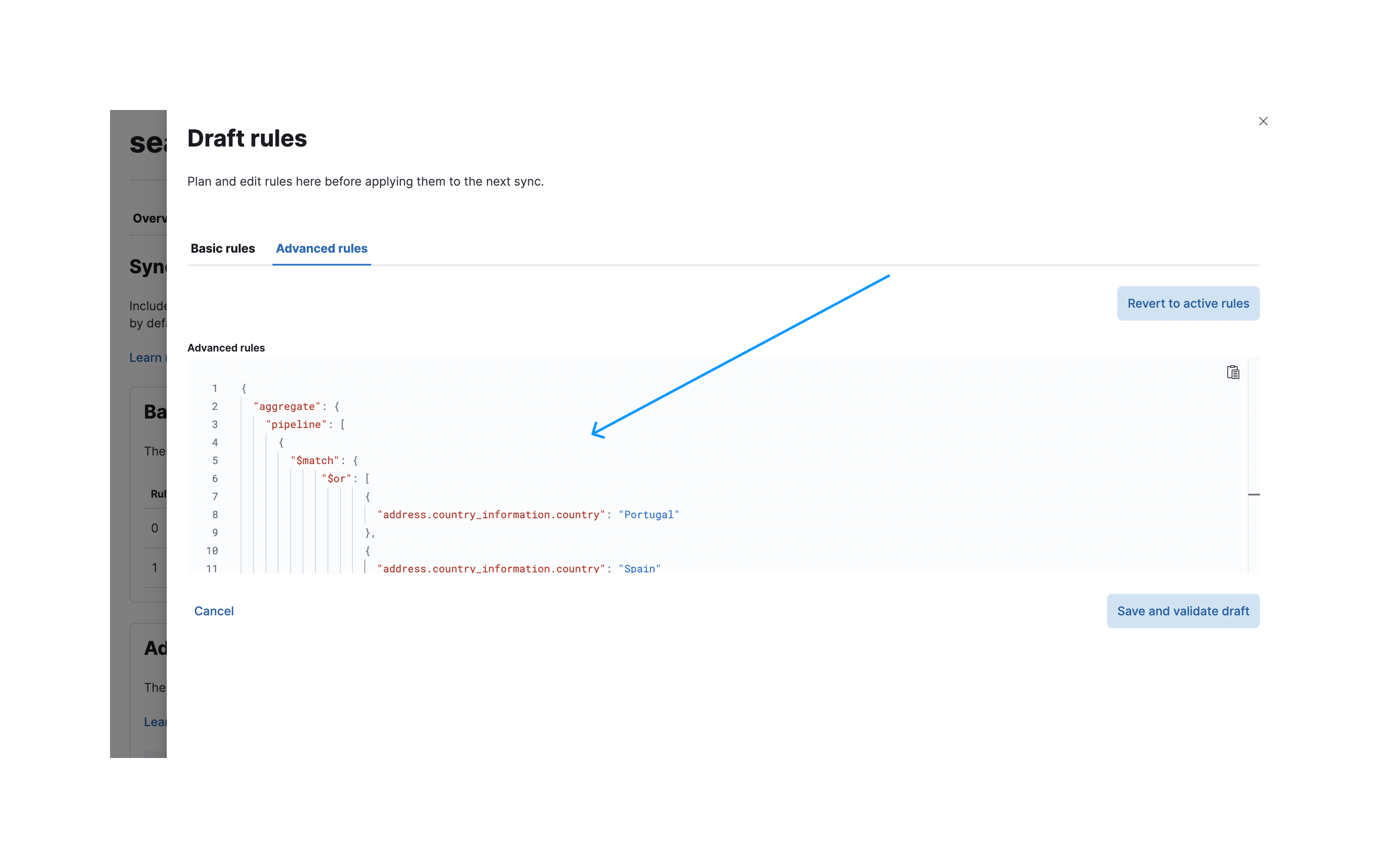

To create these advanced rules, navigate again to the sync rules creation dialog and select the Advanced rules tab.

You can now paste your aggregation pipeline into the input field under aggregate.pipeline:

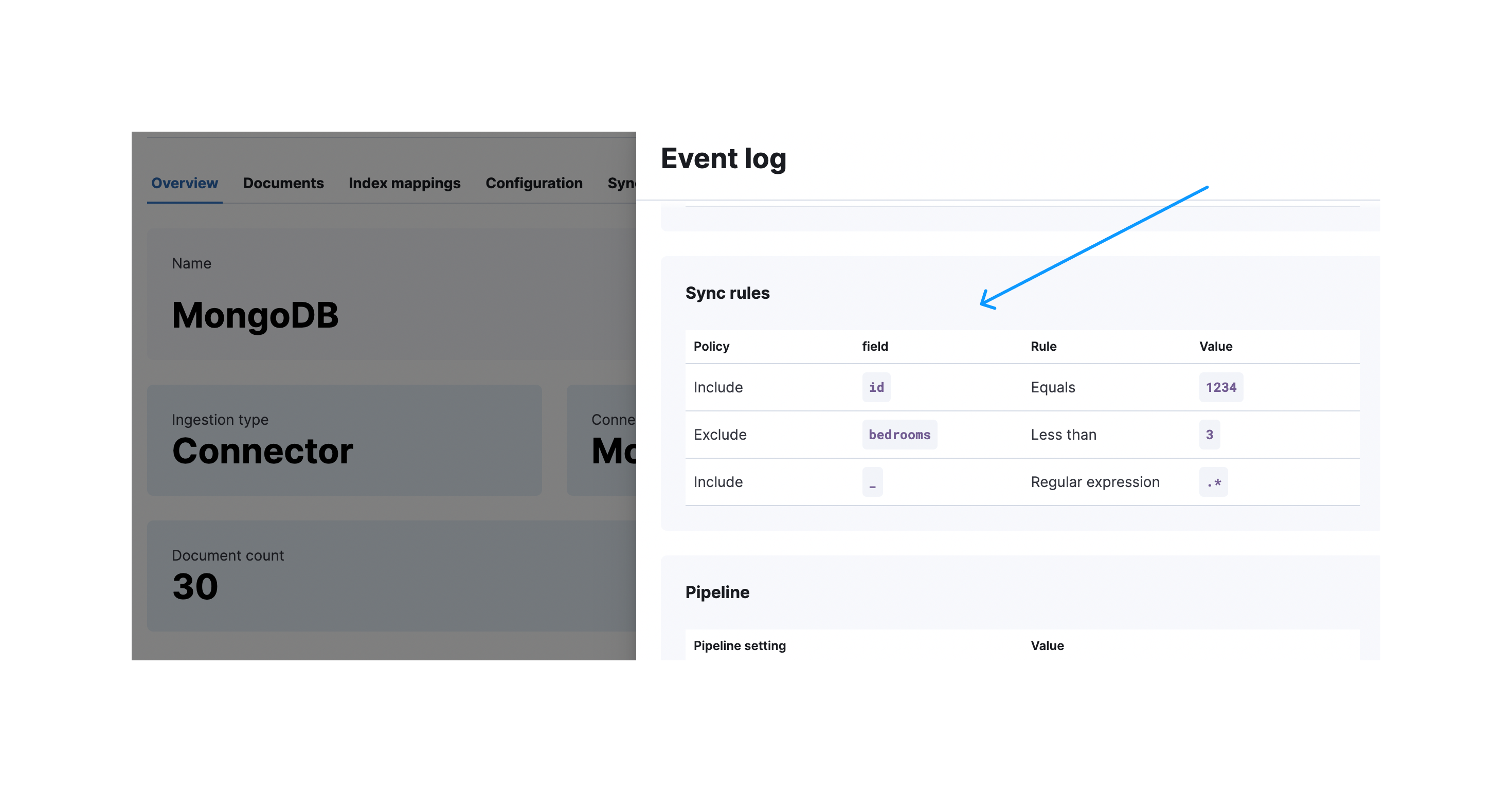

After a successful validation, you can apply them as you did for the basic rules. This view shows the applied sync rules, which will be executed in the next sync:

After a successful sync you can expand the sync details to see which rules were applied: